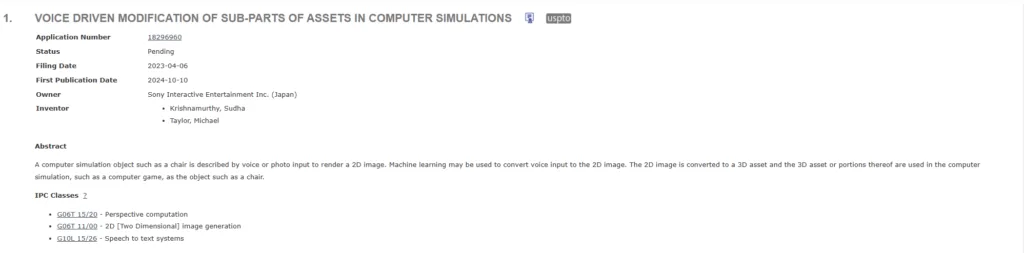

Sony seems to be taking accessibility in game development to a whole new level. The makers of PlayStation are working on technology that lets developers create and modify assets using voice commands, which could lead to faster game development.

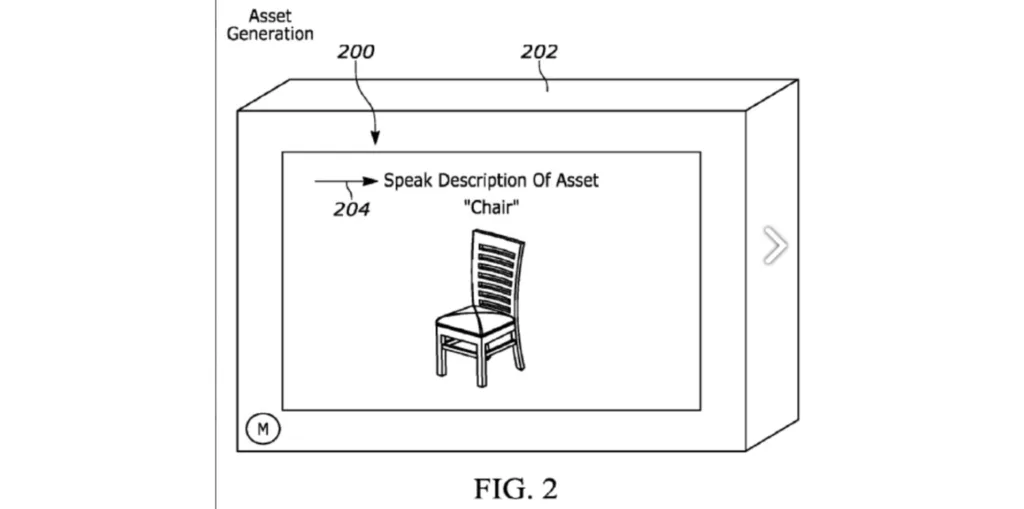

The technology allows a developer to describe an object, such as a chair, through voice or a photo. This input is used to generate a 2D image of the object.

Once the 2D image is generated, it can be transformed into a 3D asset. Machine learning may be involved in this process, aiding in the conversion from voice description to 2D and then to 3D.

The 3D object or parts of it can then be integrated into a computer simulation or video game. For example, a user could describe a specific type of chair, which would be rendered and then added to the game environment.
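The flow described above (description in, 2D image out, 3D asset out, asset placed in the game) can be sketched roughly as follows. This is only an illustration of the stages: every name here (`AssetRequest`, `generate_image`, `lift_to_3d`, `describe_to_scene`) is hypothetical and not taken from Sony, and the two model steps are stubbed out, since no details of the actual models are public.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AssetRequest:
    """What the developer supplies: a spoken description or a photo."""
    kind: str      # "voice" or "photo" (hypothetical labels)
    payload: str   # transcript text, or a path to the photo


@dataclass
class Image2D:
    """Placeholder for the generated 2D image."""
    prompt: str


@dataclass
class Asset3D:
    """Placeholder for the 3D asset derived from the 2D image."""
    name: str
    source: Image2D


@dataclass
class Scene:
    """Stand-in for the game or simulation the asset is added to."""
    assets: List[Asset3D] = field(default_factory=list)

    def add(self, asset: Asset3D) -> None:
        self.assets.append(asset)


def generate_image(request: AssetRequest) -> Image2D:
    # Assumption: a generative model would run here. This stub only
    # records the description so the data flow stays visible.
    if request.kind not in ("voice", "photo"):
        raise ValueError(f"unsupported input kind: {request.kind}")
    return Image2D(prompt=request.payload)


def lift_to_3d(image: Image2D) -> Asset3D:
    # Assumption: a 2D-to-3D reconstruction step would run here.
    name = image.prompt.strip().lower().replace(" ", "_")
    return Asset3D(name=name, source=image)


def describe_to_scene(request: AssetRequest, scene: Scene) -> Asset3D:
    """Run the three stages in order: description, 2D, 3D, then placement."""
    image = generate_image(request)
    asset = lift_to_3d(image)
    scene.add(asset)
    return asset


scene = Scene()
chair = describe_to_scene(AssetRequest("voice", "Wooden rocking chair"), scene)
print(chair.name, len(scene.assets))
```

Keeping the 2D and 3D steps as separate functions mirrors the article's description, where the 2D image is an intermediate result that is produced before the 3D conversion.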

Since this is Sony’s proprietary tech, we can expect its implementation to be exclusive to Sony’s in-house engines used by Santa Monica Studio, Guerrilla Games, and others.

With this tech, game developers could quickly generate objects and assets simply by describing them, speeding up the prototyping phase and letting them test new ideas without needing detailed 3D modeling from the outset.

Future PlayStation games could not only be developed faster, but their new 3D assets could also be created more cost-effectively.

Since many game development companies are now focusing on AI, some form of AI will likely power this tech, but we’ll know for sure when more details emerge.

Beyond Game Development

The use cases for this tech go beyond video game development. In fact, it could be integrated directly into games, allowing players to customize their environments and objects through voice commands.

For example, players could describe a specific type of chair or other furniture for a virtual home or base, and the game would render it in real time.

Open-world sandboxes could become more interactive, as players could build or modify in-game objects using natural language.